You've shipped your LLM-powered feature. Users are starting to interact with it. And then the cracks show up: wrong answers, hallucinated facts, responses that completely miss what the user was asking. Sound familiar?

This is the reality for most teams building with large language models. The models themselves have become incredibly capable, but capability without evaluation is just confidence without evidence.

Gartner predicts that through 2026, organizations will abandon 60% of AI projects that are not supported by AI-ready data. That number should stop you in your tracks.

If you’re serious about shipping AI that works, evaluation isn’t optional. It’s the whole game.

Evaluation failures rarely announce themselves. A RAG system can show strong answer relevancy scores while faithfulness quietly collapses after new documents are added, producing outputs that sound right but point to the wrong sources. A prompt tweak that improves tone can drive hallucination rates up at the same time, and you won’t know until users start flagging wrong answers in production.

What Are LLM Evaluation Tools and Why They Matter

LLM evaluation tools are frameworks and platforms that measure how well a large language model performs on real-world use cases and tasks. They go beyond checking if a model gives the ‘right’ answer and assess things like factual accuracy, response relevance, toxicity, bias, coherence, and how faithfully a model follows instructions.

Think of them as the QA pipeline for AI. Just like you wouldn’t ship software without testing it, you shouldn’t deploy an LLM application without understanding where it breaks down and under what conditions.

Here’s why this matters more than most teams realize:

- LLMs are probabilistic. The same input can yield different outputs across runs. Without evaluation, you’re flying blind on consistency.

- They hallucinate. Without measuring hallucination rates in your specific context, you have no idea how often your model is confidently making things up.

- They degrade. Prompt updates, model version changes, and new data can silently break performance that was previously solid.
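The consistency point can be made concrete with a small measurement. The sketch below is illustrative only: `consistency_rate` and `make_fake_model` are hypothetical names, and the fake model is a seeded stand-in for a real LLM call. It runs one prompt several times and reports how often the most common answer comes back.

```python
import random
from collections import Counter
from typing import Callable


def consistency_rate(model: Callable[[str], str], prompt: str, runs: int = 20) -> float:
    """Fraction of runs that return the most common answer (after light normalization)."""
    answers = [model(prompt).strip().lower() for _ in range(runs)]
    most_common_count = Counter(answers).most_common(1)[0][1]
    return most_common_count / runs


def make_fake_model(seed: int) -> Callable[[str], str]:
    """Hypothetical stand-in for an LLM: mostly right, sometimes not."""
    rng = random.Random(seed)

    def fake_model(prompt: str) -> str:
        # Weighted choice simulates sampling variation across runs.
        return rng.choices(["Paris", "paris ", "Lyon"], weights=[6, 3, 1])[0]

    return fake_model


rate = consistency_rate(make_fake_model(seed=0), "What is the capital of France?")
print(f"consistency: {rate:.2f}")
```

Exact-match agreement is a crude proxy. For free-form answers you would likely compare meaning rather than strings, but even this version shows whether a prompt is stable enough to test against.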

Good LLM testing practices are what separate a polished AI product from a liability. Evaluation tools make those practices repeatable, traceable, and scalable. See how our guide, LLM Testing & Evaluation Strategies for High-Quality AI Applications, pairs with the right evaluation tools to ship AI you can actually trust.

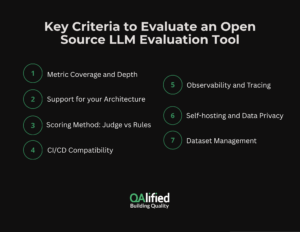

Key Criteria to Evaluate an Open Source LLM Evaluation Tool

Not all evaluation frameworks are built the same. Before you commit to one, stress-test it against these criteria:

- Metric coverage and depth. A good tool goes beyond checking if a model gives the ‘right’ answer and measures what matters in production: correctness against verified sources, answer relevancy, task completion, hallucination rate, bias, and toxicity. For a deeper look at how these are measured, see our guide on Key LLM Evaluation Metrics & Techniques for Reliable Models.

- Support for your architecture. Are you building a RAG pipeline? A conversational agent? A summarization system? Some tools are purpose-built for RAG evaluation. Others are more general. Mismatches here waste time.

- LLM-as-a-judge vs. rule-based scoring. LLM-as-a-judge approaches offer flexibility for subjective quality checks but come with cost and latency tradeoffs. Rule-based checks are faster and cheaper but less nuanced. The best tools give you both.

- CI/CD compatibility. An evaluation that only happens manually is an evaluation that gets skipped. Look for tools that slot into your existing deployment pipeline so every code check-in or merge triggers a check.

- Observability and tracing. Can you trace what happened during a specific LLM call? Can you see the full chain of prompts, retrievals, and outputs? Without this, debugging failures becomes guesswork.

- Self-hosting and data privacy. For teams with compliance requirements, the ability to run evaluations on-premises is non-negotiable. Open-source tools that support self-hosting give you full control over where your data goes.

- Dataset management. Can the tool help you build, version, and manage evaluation datasets over time? This is what turns one-off evaluations into a continuous quality improvement loop.

If a tool cannot clearly explain why a model failed in a specific test case, it is not useful in production. Most teams over-rely on LLM-as-a-judge and ignore dataset quality. In practice, weak or outdated evaluation datasets cause more failures than the choice of tool itself.
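To show what "explain why a case failed" can look like inside a pipeline, here is a minimal sketch of a regression gate, assuming you already store per-case metric scores from a previous run. `CaseResult`, `find_regressions`, the case ID, and the 0.05 tolerance are all hypothetical choices for illustration, not part of any tool listed below.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class CaseResult:
    case_id: str
    metric: str
    score: float  # 0.0 to 1.0, higher is better


def find_regressions(
    baseline: List[CaseResult],
    candidate: List[CaseResult],
    tolerance: float = 0.05,
) -> List[str]:
    """Return a human-readable reason for every (case, metric) pair that got worse."""
    base = {(r.case_id, r.metric): r.score for r in baseline}
    reasons = []
    for r in candidate:
        key = (r.case_id, r.metric)
        if key not in base:
            continue  # new case: nothing to compare against yet
        if base[key] - r.score > tolerance:
            reasons.append(
                f"{r.case_id}: {r.metric} fell from {base[key]:.2f} to {r.score:.2f}"
            )
    return reasons


# Made-up scores: a prompt tweak improved relevancy but hurt faithfulness.
baseline = [
    CaseResult("refund-policy", "faithfulness", 0.92),
    CaseResult("refund-policy", "answer_relevancy", 0.88),
]
candidate = [
    CaseResult("refund-policy", "faithfulness", 0.71),
    CaseResult("refund-policy", "answer_relevancy", 0.93),
]

regressions = find_regressions(baseline, candidate)
for line in regressions:
    print(line)
# In CI, a non-empty list would fail the build (for example via sys.exit(1)).
```

Comparing per case and per metric, rather than on one averaged score, is what keeps a gain in one metric from hiding a loss in another.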

Top Open Source LLM Evaluation Tools

| Tool | Best For | Metrics | RAG Support | Agent Support | Self-Hostable | CI/CD Ready |
|---|---|---|---|---|---|---|
| DeepEval | Unit-test-style evals | 14+ metrics | Yes | Yes | Yes | Yes |
| Langfuse | Observability + tracing | LLM-as-judge + custom | Partial | Yes | Yes | Yes |
| ArtificialQA | Enterprise QA for generative AI applications | 17+ metrics | Yes | Yes | Yes | Yes |
| RAGAS | RAG pipelines | Context, faithfulness, relevancy | Yes (focused) | No | Yes | Yes |
| MLflow | Experiment tracking | QA, RAG, comparison | Yes | Partial | Yes | Yes |
| Opik | Full-stack LLMOps | LLM-as-judge + guardrails | Yes | Yes | Yes | Yes |

Figure: Comparison Table

DeepEval

DeepEval is one of the most widely adopted open source LLM evaluation frameworks, with over 400,000 monthly downloads. It was built with a developer-first mindset, treating evaluations like unit tests through a Pytest integration that lets you embed eval checks directly into your existing test suite.

- Key features and evaluation methods: DeepEval ships with over 14 built-in metrics covering RAG evaluation (contextual precision, recall, faithfulness, answer relevancy), agent evaluation (task completion, tool correctness), conversational metrics (knowledge retention, turn relevancy), and safety checks (bias, toxicity, hallucination). Its metrics are self-explaining: when a score falls short, the tool returns the reasoning behind it.

- Typical use cases and strengths: It’s the go-to choice for teams building RAG applications, chatbots, and LLM agents who want evaluation baked into their CI/CD pipeline from day one. The modular design makes it easy to mix and match metrics or create custom ones for your domain.

- Limitations: DeepEval leans heavily on LLM-as-a-judge scoring, which adds inference cost and latency at scale. It’s also primarily developer-centric and doesn’t include production monitoring or human-in-the-loop feedback workflows out of the box.

Best suited for teams that want evaluation integrated directly into CI/CD pipelines.

Langfuse

Langfuse has become one of the most popular open source LLM observability and evaluation platforms, particularly among teams that want full control over their stack. It’s self-hostable, integrates with major LLM frameworks, and provides end-to-end traceability from input to output.

- Key features and evaluation methods: Langfuse captures every detail of an LLM call automatically, including inputs, outputs, API calls, and latency. On the evaluation side, it supports LLM-as-a-judge metrics, human annotation workflows, custom test sets, and prompt versioning with a built-in playground. You can run both online (production) and offline (pre-deployment) evaluations from the same platform.

- Typical use cases and strengths: Langfuse is well-suited for organizations building their own LLMOps pipelines who need full-stack visibility and transparency. Its self-hosting option makes it a strong fit for teams with strict data residency or compliance requirements.

- Limitations: Setting up self-hosting requires DevOps effort. Framework support for newer AI development tools is more limited compared to some closed-source alternatives. Teams that need enterprise-grade features like advanced RBAC or SOC 2 compliance may need to upgrade to a paid tier.

Best suited for teams that need full visibility into production LLM behavior.

ArtificialQA

ArtificialQA is an end-to-end evaluation platform designed for QA teams working with conversational AI systems, positioned as enterprise QA for generative AI applications. It structures the entire testing workflow inside projects: design test cases, organize them into suites, run them against live agents via API, and evaluate responses with a three-layer scoring system.

- Key features and evaluation methods: The evaluation engine combines hard assertions (regex, exact match, JSON schema, numeric tolerance) that act as a gate before any LLM scoring runs, plus 17+ weighted LLM evaluators covering hallucination, precision, compliance, tone, and RAG-specific dimensions like groundedness and citation correctness. Every run captures full snapshots for reproducibility, and scores roll up into a weighted pass/fail verdict at both case and plan level.

- Typical use cases and strengths: It’s built for teams that need a structured QA cycle, not just a scoring script. Test case lifecycle management (draft → approved → deprecated), multi-environment agent connections, and evidence export make it practical for regulated industries where auditability matters. RAG pipeline testing is native, with document ingestion and test generation directly from the knowledge corpus.

- Limitations: The structured workflow (projects, suites, plans, connections) is more than most teams need for early-stage experiments. Better suited for teams running repeatable QA cycles than for ad-hoc evaluation.

Best suited for QA teams that need a full testing lifecycle for conversational AI agents, including RAG pipelines.
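The gate-then-score pattern described above is worth seeing in miniature. This is a generic sketch of the idea, not ArtificialQA's API: every function name is hypothetical, the JSON check only verifies required keys (a simplified stand-in for schema validation), and the evaluators are stubs returning fixed scores where a real system would call an LLM judge.

```python
import json
import re
from typing import Callable, Dict, Set

Check = Callable[[str], bool]


def regex_check(pattern: str) -> Check:
    return lambda out: re.search(pattern, out) is not None


def json_keys_check(required: Set[str]) -> Check:
    def check(out: str) -> bool:
        try:
            data = json.loads(out)
        except json.JSONDecodeError:
            return False
        return isinstance(data, dict) and required <= data.keys()
    return check


def numeric_check(field: str, expected: float, tol: float) -> Check:
    def check(out: str) -> bool:
        try:
            return abs(float(json.loads(out)[field]) - expected) <= tol
        except (json.JSONDecodeError, KeyError, TypeError, ValueError):
            return False
    return check


def evaluate(output: str, assertions: Dict[str, Check],
             evaluators: Dict[str, Callable[[str], float]],
             weights: Dict[str, float], threshold: float = 0.7) -> dict:
    # Layer 1: hard assertions act as a gate; any failure skips judge scoring.
    failed = [name for name, check in assertions.items() if not check(output)]
    if failed:
        return {"passed": False, "score": 0.0,
                "reason": "hard assertions failed: " + ", ".join(failed)}
    # Layers 2 and 3: run the evaluators, then roll up a weighted verdict.
    total = sum(weights.values())
    score = sum(evaluators[name](output) * w for name, w in weights.items()) / total
    return {"passed": score >= threshold, "score": score,
            "reason": "weighted evaluator score"}


assertions = {
    "has_fields": json_keys_check({"answer", "days"}),
    "mentions_refund": regex_check(r"(?i)refund"),
    "days_is_30": numeric_check("days", 30, 0),
}
evaluators = {"groundedness": lambda out: 0.9, "tone": lambda out: 0.6}  # stub judges
weights = {"groundedness": 3.0, "tone": 1.0}

good = '{"answer": "Refunds are available within 30 days.", "days": 30}'
result = evaluate(good, assertions, evaluators, weights)
blocked = evaluate("Sorry, I can't help with that.", assertions, evaluators, weights)
print(result["passed"], blocked["reason"])
```

Running the cheap deterministic checks first means malformed outputs never reach the judge, which saves both cost and latency, and the failure reason names the exact assertion that tripped.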

RAGAS

RAGAS (Retrieval Augmented Generation Assessment) is a lightweight, open-source toolkit built specifically to evaluate RAG pipelines. If your application involves retrieval, this tool was purpose-built for your use case.

- Key features and evaluation methods: RAGAS focuses on four core metrics: context precision, context recall, faithfulness, and answer relevancy. It can combine LLM-based scoring with classical NLP metrics like BLEU and ROUGE. One standout feature is the ability to generate synthetic test datasets from your documents, so you can start evaluating even without a labeled dataset ready.

- Typical use cases and strengths: RAGAS is one of the most widely used evaluation toolkits for RAG applications. If you’re building on LangChain, LlamaIndex, or similar frameworks, integration is straightforward. It’s completely free and open source under the Apache 2.0 license.

- Limitations: RAGAS is narrowly scoped. It’s excellent for RAG but doesn’t cover conversational agents, general QA systems, or safety-focused evaluations. Teams building anything beyond a retrieval pipeline will need to supplement it with additional tools.

Best suited for RAG applications where retrieval quality directly impacts output accuracy.
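To build intuition for what these RAG metrics measure, here is a rough, library-free sketch. It assumes the relevance and support verdicts are already known and hand-labeled; in RAGAS itself those verdicts come from an LLM, and the exact formulas may differ from this simplification. The function names are hypothetical.

```python
from typing import List


def context_precision(relevance: List[bool]) -> float:
    """Mean of precision@k over the ranks k that hold a relevant chunk.

    `relevance[i]` says whether the chunk retrieved at rank i+1 was useful.
    Relevant chunks ranked earlier produce a higher score.
    """
    hits = 0
    total = 0.0
    for k, relevant in enumerate(relevance, start=1):
        if relevant:
            hits += 1
            total += hits / k  # precision@k at this rank
    return total / hits if hits else 0.0


def supported_fraction(verdicts: List[bool]) -> float:
    """Share of claims judged as supported.

    Faithfulness: claims in the answer that the retrieved context supports.
    Context recall: claims in the reference answer that the context covers.
    """
    return sum(verdicts) / len(verdicts) if verdicts else 0.0


# Same two relevant chunks, different ranking.
print(f"relevant first: {context_precision([True, False, True]):.2f}")
print(f"relevant last:  {context_precision([False, True, True]):.2f}")

# Two of three answer claims are backed by the retrieved context.
print(f"faithfulness:   {supported_fraction([True, True, False]):.2f}")
```

The two precision calls use the same retrieved chunks in a different order, which is why a ranking change alone can move the score.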

MLflow

MLflow is the experiment tracking workhorse of the ML world, and it has extended its capabilities to cover LLM evaluation. If your team is already using MLflow for traditional machine learning, adding LLM evaluations to the same platform is a natural next step.

- Key features and evaluation methods: MLflow’s LLM evaluation module supports auto-tracing for major frameworks, including OpenAI, LangChain, LlamaIndex, DSPy, and AutoGen. It handles question-answering evaluation, RAG evaluation, and model comparison with built-in metrics. Every run is logged with hyperparameters, prompt versions, and metric results.

- Typical use cases and strengths: MLflow shines for teams that want a unified platform for both traditional ML and LLM workflows. Its model registry and reproducible experiment tracking make it particularly strong for teams that need to manage multiple model versions.

- Limitations: MLflow wasn’t originally designed for LLMs, and that shows. It lacks LLM-native features like real-time monitoring, conversational tracing, or advanced hallucination detection. For teams building LLM-first products, it often needs to be paired with a more specialized evaluation tool.

Best suited for teams already managing ML experiments who want to extend into LLM evaluation.

Opik

Opik is an open source LLM evaluation and observability platform built for end-to-end testing of AI applications. It extends Comet’s experiment tracking heritage with LLM-specific evaluation capabilities.

- Key features and evaluation methods: Opik logs detailed traces of every LLM call, supports automated LLM-as-a-judge metrics for factuality and toxicity, and includes guardrails for production safety like PII redaction and topic blocking. It integrates with CI/CD pipelines and supports both RAG evaluation and multi-turn agent evaluation.

- Typical use cases and strengths: Opik is a strong option for data science and ML teams already in the Comet ecosystem who want to extend their existing experiment tracking to cover LLM workflows. Its full-stack approach from tracing to evaluation to guardrails means you don’t need to juggle multiple tools.

- Limitations: Opik is developer-centric and primarily built for CI/CD and benchmarking. It has less support for collaborative, multi-stakeholder annotation workflows. Open-source deployments also require ongoing maintenance efforts for teams without dedicated DevOps resources.

If you are building a RAG application, start with RAGAS and pair it with Langfuse or Opik for traceability.

If you need a simple starting point, use DeepEval for CI/CD validation, RAGAS for retrieval evaluation, and Langfuse for production monitoring.

How to Choose the Right LLM Evaluation Tool for Your Use Case

Here’s the honest answer: there is no single tool that does everything well. The teams that get this right pick tools that match their architecture, their team’s workflow, and where they are in the development lifecycle.

- Start with your architecture. If you’re building a RAG application, RAGAS should be your first stop for pipeline-specific metrics. Pair it with Langfuse or Opik for full traceability, and you have a solid foundation.

- Match the tool to your development stage. Early in development, DeepEval’s unit-test-style approach helps you catch regressions fast. As you move toward production, you’ll want observability tools like Langfuse or Opik to monitor live behavior.

- Think about your team’s composition. If your team is mostly engineers who live in the terminal, DeepEval and RAGAS will feel natural. If you have product managers or domain experts who need to review and annotate outputs, look for tools with accessible UIs and human annotation workflows.

- Don’t ignore compliance requirements. If you’re in healthcare, finance, or any regulated industry, the ability to self-host your evaluation infrastructure is a baseline requirement, not a nice-to-have. Both Langfuse and Opik support this well.

- Build toward a stack, not a single tool. The most effective teams combine a benchmarking tool for pre-deployment model selection, an application-level framework for CI/CD evaluation, and an observability platform for production monitoring. Each layer catches different failure modes.

The key question to ask before committing to any tool: Can this tell me, specifically, why my model failed in that test case? If the answer is yes, you’re in the right place.

Conclusion

The real question is not whether your model will fail. It will. The question is whether you will see it coming.

Most teams find out something is broken when a user tells them. By then, the damage is done, and you are debugging in production with real consequences on the line.

The right evaluation setup gives you three things before that happens: visibility into where your model struggles, consistency checks across every prompt or model change, and enough confidence in what you ship to stand behind it.

Ready to move from evaluation theory to a setup that holds up in production?

Discover how our QA Consultancy Services can help you design, implement, and scale a robust LLM evaluation strategy.