Speed has become the currency of modern software delivery. Product teams are expected to release faster, iterate constantly, and respond immediately to market demands. Roadmaps are aggressive. Competition is fierce. Stakeholders want results—now.

In this environment, skipping QA can feel like a practical decision. If testing takes time, removing or reducing it seems like the fastest way to meet a deadline.

But the full consequences of skipping QA are rarely visible in the first few days after release. They accumulate gradually, through bugs, rework, lost trust, and slowed momentum. What looks like acceleration is often the beginning of long-term instability.

Let’s explore why teams make this choice, what truly happens when they do, and how to maintain delivery speed without sacrificing quality.

Why Teams Keep Skipping QA Under Delivery Pressure

Most organizations don’t skip testing because they don’t value quality. They do it because they feel cornered.

Here are the most common triggers:

1. Unmovable deadlines – A launch date tied to marketing campaigns or client commitments.

2. Budget pressure – QA is mistakenly seen as an optional cost instead of a risk mitigation investment.

3. Last-minute feature additions – Scope expands, but timelines don’t.

4. Overreliance on developers’ self-testing – Assuming “it works on my machine” is enough.

5. Heavy trust in automation alone – Believing automated checks fully replace human validation.

6. Compressed test cycles – Functional test cycles are skipped to gain a few extra days before deployment.

In these moments, reducing or skipping QA seems like the least painful compromise.

But it rarely stays painless.

The Immediate Consequences of Skipping QA Before Release

The short-term consequences of skipping QA usually surface within days—or even hours—after deployment.

1. More Production Defects

When teams rush releases or skip validation, defects reach real users. These can include:

- Broken login flows

- Payment processing failures

- Incorrect calculations

- Integration errors

- Security gaps

When you’re skipping functional test phases, you’re essentially transferring risk from your QA environment to your customers.

And fixing issues in production is almost always more expensive than fixing them during development.

2. Emergency Hotfix Cycles

A rushed release often leads to:

- Incident triage calls

- Emergency patching

- Rollbacks

- Urgent redeployments

Time saved by skipping QA is quickly consumed by crisis management. What looked like a one-week acceleration can become two weeks of damage control.

3. Increased Support and Customer Complaints

The consequences of bad testing are immediately visible in:

- Higher support ticket volumes

- Negative user feedback

- Customer frustration

- Escalations to management

Users don’t see internal pressure—they only see product reliability. Even minor defects can damage their perception of your brand.

4. Team Stress and Burnout

Developers and product teams often pay the hidden cost. Late-night fixes, weekend deployments, and constant instability create a reactive culture.

The short-term gain becomes a long-term morale problem.

The Hidden Long-Term Costs of Bad Testing

While production bugs are painful, the deeper damage accumulates over time.

1. Technical Debt That Slows Future Releases

Repeatedly skipping QA introduces fragile features and incomplete validation. Over time:

- Regression coverage becomes weak

- Legacy bugs remain unresolved

- Code complexity increases

- Releases require longer stabilization periods

Ironically, skipping QA to move faster today tends to make future releases slower.

2. Reduced Predictability

When quality is inconsistent, leadership loses confidence in delivery timelines. Sales teams hesitate to promise features. Clients question stability.

The consequences of skipping QA extend beyond engineering—they impact revenue forecasting and business credibility.

3. Reputation and Compliance Risk

In regulated industries, the consequences of bad testing can include:

- Compliance violations

- Security breaches

- Data integrity issues

- Legal exposure

A single serious production incident can undo years of brand-building effort.

4. Slower Innovation

Instead of building new capabilities, teams spend time fixing old problems. Innovation capacity shrinks because resources are tied up in rework.

This is one of the most underestimated consequences of skipping QA: lost opportunity.

Why Skipping QA Actually Reduces Delivery Speed

The idea that skipping QA increases speed only works if you measure speed in days—not in months or years.

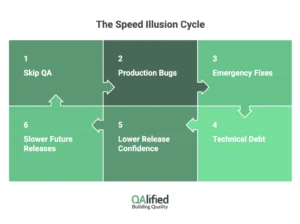

Here’s what typically happens:

- A feature is released early.

- Bugs are discovered in production.

- Emergency fixes are developed.

- New bugs appear due to rushed patches.

- Regression risks increase.

- Future releases require more caution and validation.

In stable environments, defects are caught during development or QA—where the cost and disruption are lower. In unstable environments, defects are found by users—where the impact multiplies.

Fixing a bug in development might take hours. Fixing it in production may involve investigation, coordination across teams, communication with customers, and multiple deployments.

That is not faster delivery. It is delayed delivery disguised as urgency.

Skipping functional test validation today often results in longer release cycles tomorrow because confidence decreases and risk increases.

True agility requires stability.

The cycle described above explains why organizations that consistently invest in testing often outperform those that cut corners.

Sustainable speed is built on controlled quality—not avoided testing.

How to Balance Speed and Quality Without Skipping QA

The solution isn’t to test endlessly. It’s to test intelligently.

Here’s how organizations maintain velocity without sacrificing reliability:

1. Shift Quality Left

Integrate QA early in the development lifecycle:

- Review requirements collaboratively

- Define acceptance criteria clearly

- Design test cases before coding starts

- Automate unit and integration tests early

When testing begins earlier, issues are detected sooner—at a lower cost.
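As an illustration of designing test cases before coding starts, acceptance criteria can be written as executable checks first, with the implementation written to satisfy them. The sketch below only shows the pattern. The discount rule, the `apply_discount` function, and the three criteria are hypothetical and not taken from any real product.

```python
def apply_discount(price: float, percent: float) -> float:
    """Hypothetical function under test: reduce a price by a percentage."""
    if price < 0:
        raise ValueError("price must not be negative")
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


# Each check mirrors one (assumed) acceptance criterion agreed before coding.
def test_discount_reduces_price():
    assert apply_discount(200.0, 25) == 150.0


def test_zero_discount_keeps_price():
    assert apply_discount(80.0, 0) == 80.0


def test_invalid_percent_is_rejected():
    try:
        apply_discount(80.0, 120)
    except ValueError:
        return
    raise AssertionError("expected ValueError for a percent above 100")


# Minimal runner so the checks execute without a test framework installed.
for check in (
    test_discount_reduces_price,
    test_zero_discount_keeps_price,
    test_invalid_percent_is_rejected,
):
    check()
print("all acceptance checks passed")
```

The `test_` naming means the same functions could also be collected by a test runner such as pytest and run on every commit, which is where the early feedback comes from.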

2. Apply Risk-Based Testing

Not every feature requires the same depth of testing.

Prioritize:

- Core revenue-generating flows

- Security-sensitive components

- Customer-facing interfaces

- High-traffic functionalities

This allows teams to optimize coverage without slowing down delivery.
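One way to make this prioritization concrete is to score each feature against the four criteria above and test the highest-scoring areas first. The following is a minimal sketch rather than a prescribed method. The feature names, the weights, and the `risk_score` and `prioritize` helpers are all hypothetical and would need tuning for a real product.

```python
from dataclasses import dataclass

# Hypothetical weights for the four criteria listed above; tune per product.
WEIGHTS = {
    "revenue_critical": 4,
    "security_sensitive": 4,
    "customer_facing": 2,
    "high_traffic": 2,
}


@dataclass(frozen=True)
class Feature:
    name: str
    revenue_critical: bool = False
    security_sensitive: bool = False
    customer_facing: bool = False
    high_traffic: bool = False


def risk_score(feature: Feature) -> int:
    """Sum the weights of every risk criterion the feature matches."""
    return sum(w for key, w in WEIGHTS.items() if getattr(feature, key))


def prioritize(features: list[Feature]) -> list[Feature]:
    """Return features ordered from highest to lowest risk score."""
    return sorted(features, key=risk_score, reverse=True)


# Illustrative feature list (assumed, not taken from a real product).
features = [
    Feature("admin report export"),
    Feature("checkout", revenue_critical=True, customer_facing=True, high_traffic=True),
    Feature("login", security_sensitive=True, customer_facing=True, high_traffic=True),
    Feature("profile page", customer_facing=True),
]

for f in prioritize(features):
    print(f"{risk_score(f):>2}  {f.name}")
```

With these assumed weights, checkout and login rank first and the internal report export ranks last, so a team short on time would spend its deepest testing effort on the first two.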

3. Combine Automation with Human Insight

Automation accelerates regression cycles, but it cannot fully replace human exploration.

Balance is key:

- Automated regression suites for stability

- API testing for integration reliability

- Performance testing for scalability

- Exploratory testing for edge cases

Automation increases speed. Human expertise adds judgment.

4. Avoid Skipping Functional Test Validation

If timelines are tight, reduce scope—not quality.

Instead of skipping functional test phases:

- Release smaller increments

- Prioritize critical features

- Improve parallel testing processes

- Optimize test environments

Skipping QA introduces uncontrolled risk. Managing scope preserves stability.

5. Leverage Independent QA Expertise

An external perspective can significantly improve objectivity and efficiency. Many growing organizations choose to outsource software testing to scale quality practices without overloading internal teams.

Working with a specialized partner provides:

- Mature testing methodologies

- Scalable automation frameworks

- Industry best practices

- Faster onboarding of testing capacity

At QAlified, our QA consultancy services help companies design testing strategies aligned with rapid delivery goals. We focus on optimizing quality processes so speed becomes sustainable—not risky.