Artificial intelligence is no longer experimental. AI-driven software is now embedded in customer support platforms, recommendation engines, healthcare systems, financial services, and enterprise automation.

Yet while software has evolved dramatically, many quality assurance practices have not.

This gap explains why traditional QA fails in modern environments. Traditional QA was built for deterministic, rule-based systems. AI-driven applications are probabilistic, adaptive, and constantly changing. Applying legacy QA models to AI systems creates blind spots, risks, and missed opportunities.

In this article, we’ll explore why traditional QA fails, examine the most common traditional QA mistakes, unpack the biggest QA testing challenges teams face today, and outline what organizations must do instead. Most importantly, we’ll show how modern QA evolves from simple defect detection into quality intelligence that enables smarter business decisions and scalable AI systems.

AI-Driven Software Has Changed the Rules of Quality

Traditional software follows predictable logic:

- Given the same input, it produces the same output.

- Business rules are explicit.

- Behavior changes only when code changes.

AI-driven software breaks all three assumptions.

Machine learning models learn from data, adapt over time, and often behave differently depending on context. Two identical inputs may generate different outputs because of sampling randomness, shifting confidence scores, or retraining on updated data.

Key Differences Between Traditional and AI-Driven Software

Comparative Chart: Traditional vs AI-Driven Systems

| Dimension | Traditional Software | AI-Driven Software |
|---|---|---|
| Behavior | Deterministic | Probabilistic |
| Logic | Rule-based | Model-based |
| Output | Predictable | Variable |
| Change Trigger | Code updates | Data & retraining |
| Testing Focus | Functional correctness | Accuracy, bias, reliability |
| Failure Modes | Bugs & regressions | Drift, hallucinations, bias |

This shift fundamentally changes what quality means. Testing is no longer just about “does it work?” It also asks:

- Does it behave responsibly?

- Is it accurate over time?

- Is it explainable and trustworthy?

- Does it degrade safely?

These realities introduce testing challenges that legacy QA processes were never designed to handle.

Why Traditional QA Fails in Modern AI Systems

To understand why traditional QA fails, we need to look at its original design goals and revisit what QA testing is in its traditional form.

Traditional QA focuses on:

- Verifying predefined requirements

- Executing scripted test cases

- Catching functional defects

- Preventing regressions

This approach works well for static systems. AI systems, however, are dynamic by nature.

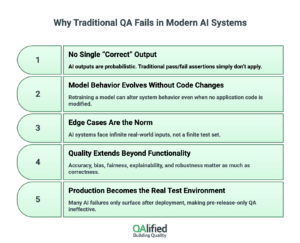

Core Reasons Traditional QA Breaks Down

- No Single “Correct” Output: AI outputs are probabilistic. Traditional pass/fail assertions simply don’t apply.

- Model Behavior Evolves Without Code Changes: Retraining a model can alter system behavior even when no application code is modified.

- Edge Cases Are the Norm, Not the Exception: AI systems face infinite real-world inputs, not a finite test set.

- Quality Extends Beyond Functionality: Accuracy, bias, fairness, explainability, and robustness matter as much as correctness.

- Production Becomes the Real Test Environment: Many AI failures only surface after deployment, making pre-release-only QA ineffective.

These factors explain why traditional QA fails repeatedly when applied to AI-driven platforms.
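One way to picture the shift away from exact-match assertions is a test that checks an aggregate quality bar over many labeled examples. The sketch below is illustrative only: `flaky_sentiment_model`, `passes_quality_bar`, the 90% hit rate, and the thresholds are all hypothetical stand-ins, not part of any real system.

```python
import random


def flaky_sentiment_model(text: str, rng: random.Random) -> str:
    """Hypothetical stand-in for a probabilistic model: right roughly 90% of the time."""
    truth = "positive" if "good" in text else "negative"
    if rng.random() < 0.9:
        return truth
    return "negative" if truth == "positive" else "positive"


def accuracy(model, labeled_examples, rng: random.Random) -> float:
    """Fraction of examples where the model's output matches the label."""
    correct = sum(model(text, rng) == label for text, label in labeled_examples)
    return correct / len(labeled_examples)


def passes_quality_bar(model, labeled_examples, min_accuracy: float, seed: int = 0) -> bool:
    """Pass if aggregate accuracy clears the bar; no single output must be exact."""
    rng = random.Random(seed)  # seeded so the test run itself is repeatable
    return accuracy(model, labeled_examples, rng) >= min_accuracy


# Assumed evaluation set: 1,000 labeled examples built from two toy sentences.
EXAMPLES = [("good service", "positive"), ("slow and buggy", "negative")] * 500

if __name__ == "__main__":
    print(passes_quality_bar(flaky_sentiment_model, EXAMPLES, min_accuracy=0.85))
```

A single wrong answer no longer fails the build; falling below the agreed accuracy bar does. Where that bar sits is a product and risk decision, not something the code can settle.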

Traditional QA Common Mistakes in AI-Driven Testing

When teams apply legacy QA thinking to AI systems, they fall into predictable traps. These common mistakes increase risk and create false confidence.

The Most Common Mistakes


- Treating AI Models Like Deterministic Code: Expecting consistent outputs ignores probabilistic behavior.

- Over-Reliance on Static Test Data: Fixed datasets fail to represent real-world variability and evolving user behavior.

- Ignoring Data Quality and Bias: Many QA teams test the application but not the training or inference data.

- No Monitoring After Release: Once deployed, AI systems drift, and without monitoring, quality silently degrades.

- Functional-Only Validation: Teams validate workflows but skip ethical, fairness, and explainability testing.

- Manual Testing at Scale: Human-driven testing cannot keep up with AI complexity or release velocity.

These common mistakes explain why organizations experience AI failures even when “all tests passed.”
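To make the "ignoring data quality and bias" mistake concrete, here is a minimal sketch of one possible check: comparing accuracy across groups in a labeled evaluation set. The group names, the record layout, and the helper names `accuracy_by_group` and `max_accuracy_gap` are assumptions for illustration. Accuracy gap is only one of several fairness measures a team might choose.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """records: iterable of (group, predicted_label, true_label) tuples."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for group, predicted, actual in records:
        totals[group] += 1
        hits[group] += int(predicted == actual)
    return {group: hits[group] / totals[group] for group in totals}


def max_accuracy_gap(records) -> float:
    """Largest difference in accuracy between any two groups."""
    scores = accuracy_by_group(records)
    return max(scores.values()) - min(scores.values())


# Hypothetical evaluation records: the model is perfect on group_a, weak on group_b.
RECORDS = [
    ("group_a", 1, 1), ("group_a", 0, 0), ("group_a", 1, 1), ("group_a", 0, 0),
    ("group_b", 1, 0), ("group_b", 0, 0), ("group_b", 1, 1), ("group_b", 0, 1),
]

if __name__ == "__main__":
    print(accuracy_by_group(RECORDS))
    print(max_accuracy_gap(RECORDS))
```

Overall accuracy here is 75%, which might pass a functional check, yet one group sees half the quality of the other. That gap is the kind of signal a functional-only test suite never surfaces.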

Key Challenges in QA Testing for AI-Based Applications

AI introduces a new category of risk that spans technology, data, and business outcomes. These are the real-world QA testing challenges teams face today.

Top Challenges in QA Testing

Infographic Summary: Challenges in QA Testing

- Model drift over time

- Data bias and representativeness

- Lack of explainability

- Non-deterministic outputs

- Ethical and regulatory risks

- Monitoring quality in production

- Scaling validation across datasets and models

Why These Challenges Are Hard

Unlike traditional applications, AI systems:

- Fail silently

- Degrade gradually

- Produce “acceptable-looking” wrong answers

- Can harm trust before bugs are detected

These characteristics compound the challenges listed above, forcing teams to rethink both tooling and mindset.
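Gradual, silent degradation is easier to reason about with a number attached. One commonly used drift signal is the Population Stability Index (PSI), which compares how a feature or score is distributed at training time versus in production. The sketch below is a simplified stdlib-only version; the `psi` helper, the equal-width binning, and the sample data are illustrative assumptions. The 0.25 cutoff in the usage note is a widely quoted rule of thumb, not a standard.

```python
import math


def psi(expected, actual, bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index between a baseline sample and a newer sample.

    Bins are equal-width over the baseline's range; values outside that
    range are clipped into the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins
    if width == 0:
        width = 1.0  # degenerate baseline: every value lands in the first bin

    def fractions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)
            counts[idx] += 1
        # eps keeps empty bins from producing log(0) or division by zero
        return [max(c / len(values), eps) for c in counts]

    e, a = fractions(expected), fractions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical score samples: baseline spread over [0, 1), production shifted upward.
BASELINE = [i / 100 for i in range(100)]
SHIFTED = [0.5 + i / 200 for i in range(100)]

if __name__ == "__main__":
    print(psi(BASELINE, BASELINE))
    print(psi(BASELINE, SHIFTED))
```

An identical distribution scores zero, while the shifted sample scores far above the 0.25 level that many teams treat as a sign of significant drift. The model's code is the same in both cases; only the data moved.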

What to Do Instead: Rethinking QA for AI-Driven Software

If traditional QA doesn’t work, what replaces it?

The answer is not “more test cases.” It’s a modern QA strategy designed for AI systems — one that treats quality as continuous, data-driven, and business-aligned.

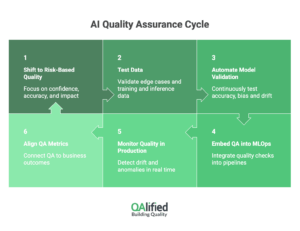

A Modern QA Approach for AI

Step-by-Step Framework

- Shift from Pass/Fail to Risk-Based Quality: Measure confidence, accuracy ranges, and impact severity.

- Test Data, Not Just Code: Validate training data, inference data, and edge cases.

- Automate Model Validation: Continuously test accuracy, bias, and drift.

- Embed QA into MLOps Pipelines: Quality checks before, during, and after deployment.

- Monitor Quality in Production: Detect drift, anomalies, and degradation in real time.

- Align QA Metrics with Business Outcomes: Customer trust, compliance, revenue impact.


This approach directly addresses why traditional QA fails by replacing static validation with continuous intelligence.
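As a rough illustration of how risk-based quality could plug into a pipeline, the sketch below evaluates a set of model metrics against thresholds that carry a severity. Everything here is hypothetical: the `Threshold` and `evaluate_gate` names, the metric names, and the limits are placeholders a team would replace with its own.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    metric: str
    limit: float
    higher_is_better: bool
    severity: str  # "block" stops the release, "warn" only flags it


def evaluate_gate(metrics: dict, thresholds: list) -> dict:
    """Compare each metric to its threshold and decide whether to release."""
    failures = []
    for t in thresholds:
        value = metrics[t.metric]
        ok = value >= t.limit if t.higher_is_better else value <= t.limit
        if not ok:
            failures.append((t.severity, t.metric, value))
    blocked = any(severity == "block" for severity, _, _ in failures)
    return {"release": not blocked, "failures": failures}


# Assumed thresholds and metric values for a candidate model.
THRESHOLDS = [
    Threshold("accuracy", 0.90, higher_is_better=True, severity="block"),
    Threshold("group_accuracy_gap", 0.05, higher_is_better=False, severity="block"),
    Threshold("drift_psi", 0.20, higher_is_better=False, severity="warn"),
]
CANDIDATE = {"accuracy": 0.93, "group_accuracy_gap": 0.08, "drift_psi": 0.12}

if __name__ == "__main__":
    print(evaluate_gate(CANDIDATE, THRESHOLDS))
```

The candidate clears the accuracy bar but is still held back because its fairness gap exceeds the limit. Separating "block" from "warn" is one simple way to encode impact severity rather than treating every failed check the same.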

The Foundations of AI-Ready Quality Assurance

AI-ready QA is not a tool — it’s a capability. Organizations that succeed build quality into the entire AI lifecycle.

Core Capabilities of AI-Ready QA

Comparative Chart: Traditional QA vs AI-Ready QA

| Capability | Traditional QA | AI-Ready QA |
|---|---|---|
| Test Design | Scripted cases | Scenario & data-driven |
| Automation | UI & API | Model & data validation |
| Monitoring | Pre-release | Continuous |
| Metrics | Defects | Accuracy, bias, drift |
| Risk Management | Functional bugs | Business & ethical risk |
| Decision Support | Limited | Strategic insights |

Best Practices for AI-Ready QA

Infographic: AI-Ready QA Best Practices

- Continuous validation

- Explainability testing

- Bias detection

- Real-world data simulation

- Automated monitoring

- Cross-functional collaboration

These foundations transform QA from a bottleneck into a strategic enabler.
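Continuous validation and automated monitoring can start small. The following sketch tracks accuracy over a sliding window of recent labeled outcomes and signals when it drops below a floor. The `RollingAccuracyMonitor` class, the window size, and the threshold are illustrative assumptions. It also assumes ground-truth labels eventually arrive for production predictions, which is not true for every system.

```python
from collections import deque


class RollingAccuracyMonitor:
    """Tracks accuracy over the most recent `window` labeled predictions."""

    def __init__(self, window: int, min_accuracy: float):
        self.outcomes = deque(maxlen=window)  # oldest outcomes fall off automatically
        self.min_accuracy = min_accuracy

    def accuracy(self) -> float:
        return sum(self.outcomes) / len(self.outcomes)

    def record(self, predicted, actual) -> bool:
        """Record one outcome; return True if an alert should fire.

        Alerts are suppressed until the window is full, so a handful of
        early misses does not trigger noise.
        """
        self.outcomes.append(predicted == actual)
        window_full = len(self.outcomes) == self.outcomes.maxlen
        return window_full and self.accuracy() < self.min_accuracy


if __name__ == "__main__":
    monitor = RollingAccuracyMonitor(window=5, min_accuracy=0.8)
    stream = [(1, 1)] * 5 + [(1, 0), (1, 0)]  # five correct, then two misses
    for predicted, actual in stream:
        print(monitor.record(predicted, actual))
```

In practice the alert would feed whatever paging or dashboard tooling the team already uses; the point of the sketch is that quality becomes a running measurement rather than a one-time sign-off.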

From Quality Control to Quality Intelligence

Traditional QA answers the question: “Is the software broken?”

Modern QA answers a more powerful question: “Is the system trustworthy, scalable, and aligned with business goals?”

This shift marks the evolution from quality control to quality intelligence.

What Is Quality Intelligence?

Quality Intelligence is the use of data, analytics, and AI-driven insights to proactively improve software quality. Instead of only detecting defects after they occur, Quality Intelligence analyzes patterns, risks, and performance metrics to predict issues, optimize testing efforts, and support smarter decision-making across the development lifecycle.

Quality intelligence combines:

- Testing data

- Production metrics

- User behavior

- Business KPIs

Instead of reporting defects, QA provides insights that inform:

- Product decisions

- Model retraining strategies

- Risk mitigation

- Customer experience improvements

Business Impact of Quality Intelligence

Benefits Summary

- Faster AI releases with lower risk

- Improved customer trust

- Better regulatory compliance

- Reduced operational surprises

- Long-term scalability

This evolution directly addresses the modern challenges in QA testing while unlocking new value beyond bug detection.

For teams still defining the basics, we recommend reviewing what QA consultancy services cover and how the role of QA has expanded in today’s AI-driven landscape.

Build AI-Ready QA with QAlified

AI-driven software demands a new definition of quality. The reality is clear: the failure of traditional QA is no longer a theoretical discussion. It is a daily operational risk.

By understanding the common mistakes of traditional QA, addressing the testing challenges that AI introduces, and adopting AI-ready quality practices, organizations can move from reactive testing to proactive intelligence.

At QAlified, we help organizations modernize their QA strategy for AI-driven systems — combining deep testing expertise, automation, and quality intelligence.

Because in the age of AI, quality isn’t just about finding bugs — it’s about building trust.