Surf Life Saving Queensland (SLSQ) is an organization dedicated to aquatic rescue and safety across 8,000 kilometers of coastline in Australia, with the support of 34,000 volunteers.

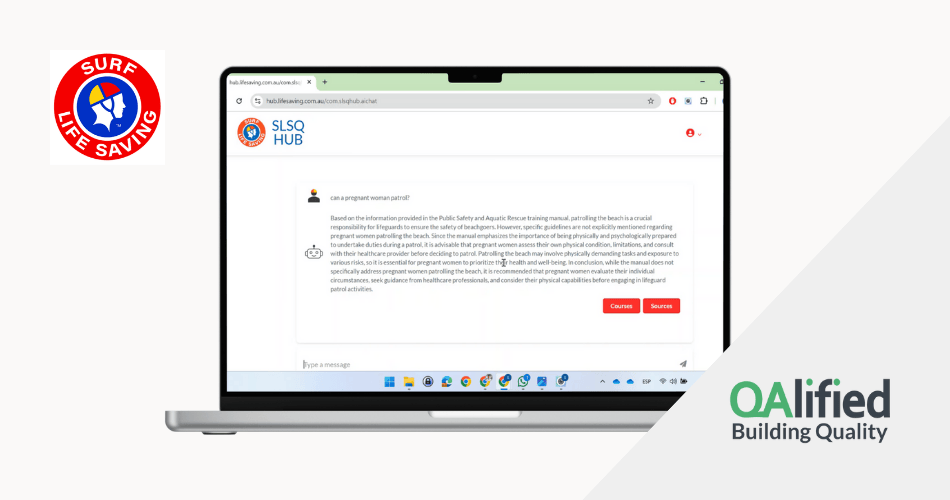

SLSQ Hub is an AI-powered platform that centralizes inquiry management and provides fast access to key information about memberships, training, rescue awards, and procedures.

Scope

The QAlified team was responsible for validating the accuracy, reliability, and security of the AI system used in the SLSQ Hub. Extensive testing was carried out to ensure that the AI model delivered accurate responses that aligned with SLSQ’s operational policies and were free from biases that could affect service quality.

The testing and QA activities covered close collaboration with development, test case design, and test automation.

From the beginning, the QA team worked closely with the developers. This gave the team a deep understanding of how the AI solutions were designed and allowed the test cases to evolve alongside the system.

A comprehensive set of test cases was designed, including:

- Standard scenarios based on expected questions and answers provided by SLSQ.

- Edge cases created by the QAlified team to assess system robustness, such as:
  - Ambiguous queries or queries with only partial information.
  - Questions involving unusual combinations of memberships and certifications.
  - Requests for information about non-existent events or courses.
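The test suite itself is not published, but the split between client-supplied standard scenarios and QA-authored edge cases maps naturally onto a tagged dataset. The Python sketch below shows one hypothetical way to structure it; the `QACase` and `Category` names, the case IDs, the tags, and the example questions are illustrative assumptions, not SLSQ or ArtificialQA artifacts.

```python
from dataclasses import dataclass
from enum import Enum


class Category(Enum):
    STANDARD = "standard"  # expected Q&A pairs supplied by the client
    EDGE = "edge"          # robustness cases written by the QA team


@dataclass(frozen=True)
class QACase:
    case_id: str
    question: str
    expected: str                # reference answer, or the expected behaviour
    category: Category
    tags: tuple[str, ...] = ()


# Hypothetical examples only; the real test questions are not published.
CASES = [
    QACase("STD-001", "How do I renew my membership?",
           "<reference answer supplied by the client>",
           Category.STANDARD, ("membership",)),
    QACase("EDGE-001", "When does the course start?",
           "Asks which course the user means", Category.EDGE,
           ("ambiguous", "partial-information")),
    QACase("EDGE-002", "Can I enrol in course XYZ-999?",  # deliberately non-existent
           "States that no such course exists", Category.EDGE,
           ("non-existent",)),
]


def by_category(cases: list[QACase], category: Category) -> list[QACase]:
    """Return the cases that belong to one category."""
    return [c for c in cases if c.category is category]


def by_tag(cases: list[QACase], tag: str) -> list[QACase]:
    """Return the cases carrying a given tag."""
    return [c for c in cases if tag in c.tags]


if __name__ == "__main__":
    for cat in Category:
        print(f"{cat.value}: {len(by_category(CASES, cat))} case(s)")
```

Tagging each case lets a report break results down by category or tag, for example to check whether ambiguous queries fail more often than standard ones.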

To optimize validation, we implemented ArtificialQA, our test automation tool, which allowed us to:

- Run automated API-based tests, reducing execution time.

- Compare system-generated answers with expected responses.

- Analyze answers across multiple dimensions, including:
  - Formality and tone.
  - Accuracy of the information.
  - Detection of biases or unexpected deviations.
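ArtificialQA's internals are not described in this case study, so the following is only a minimal sketch of an automated answer check, assuming the Hub exposes a question-answering API that can be wrapped in a plain Python callable. It scores each reply against its expected response with SQuAD-style token-overlap F1 and applies a crude formality check. The metric, the `INFORMAL_WORDS` list, the `stub_api` client, and the 0.6 pass threshold are all assumptions; bias detection is left out because it needs far richer analysis than a word list.

```python
import re
from collections import Counter
from typing import Callable


def tokens(text: str) -> list[str]:
    """Lower-case word tokens; punctuation is dropped."""
    return re.findall(r"[a-z0-9']+", text.lower())


def token_f1(generated: str, expected: str) -> float:
    """SQuAD-style token-overlap F1 between two answers, from 0.0 to 1.0."""
    gen, exp = tokens(generated), tokens(expected)
    if not gen or not exp:
        return float(gen == exp)  # both empty counts as a match
    overlap = sum((Counter(gen) & Counter(exp)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(gen)
    recall = overlap / len(exp)
    return 2 * precision * recall / (precision + recall)


# Hypothetical informal markers; a real tone check would be far richer.
INFORMAL_WORDS = {"gonna", "wanna", "yeah", "lol", "hey"}


def is_formal(text: str) -> bool:
    """True if the text contains none of the informal markers."""
    return not INFORMAL_WORDS.intersection(tokens(text))


def run_case(question: str, expected: str,
             ask: Callable[[str], str], threshold: float = 0.6) -> dict:
    """Send one question through `ask` (for example, an HTTP call to the
    chatbot API) and score the reply. `threshold` is an assumed pass mark."""
    answer = ask(question)
    f1 = token_f1(answer, expected)
    formal = is_formal(answer)
    return {"question": question, "f1": round(f1, 2),
            "formal": formal, "passed": f1 >= threshold and formal}


def stub_api(question: str) -> str:
    """Hypothetical stand-in for the real API client, returning a canned reply."""
    return "Yeah, the Hub covers memberships, training, rescue awards and procedures."


if __name__ == "__main__":
    print(run_case("What does the Hub cover?",
                   "The Hub covers memberships, training, rescue awards and procedures.",
                   stub_api))
    # f1 is 0.95, but the reply still fails because "yeah" breaks the formality check
```

Keeping accuracy and tone as separate fields, rather than one blended score, shows developers which dimension a failing answer missed.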

Automated testing accelerated validation cycles and gave developers detailed insights to improve response generation. These insights guided fine-tuning of the AI models, improving both their accuracy and reliability.

Technologies and tools

- Retrieval-augmented generation (RAG) testing for validating generated responses

- QA dataset-based evaluation (expected Q&A sets)

- API-based test automation

- Answer analysis: formality, coherence, and bias detection

- Vector databases for information storage
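One common part of RAG testing is checking retrieval separately from generation: for each test question, does the vector search return the document that actually contains the answer? The case study names neither the embedding model nor the vector database, so this sketch assumes bag-of-words vectors and brute-force cosine similarity in their place. `TinyVectorStore`, `hit_rate`, and the document IDs and texts are invented for illustration.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Bag-of-words vector; a stand-in for a real embedding model."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse vectors."""
    dot = sum(count * b[term] for term, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class TinyVectorStore:
    """Brute-force in-memory store; a real vector database adds indexing,
    approximate search, and persistence."""

    def __init__(self) -> None:
        self.vectors: dict[str, Counter] = {}

    def add(self, doc_id: str, text: str) -> None:
        self.vectors[doc_id] = embed(text)

    def search(self, query: str, k: int = 3) -> list[str]:
        """Return the IDs of the k most similar documents."""
        q = embed(query)
        ranked = sorted(self.vectors,
                        key=lambda d: cosine(q, self.vectors[d]),
                        reverse=True)
        return ranked[:k]


def hit_rate(store: TinyVectorStore,
             labelled: list[tuple[str, str]], k: int = 3) -> float:
    """Share of questions whose known source document appears in the top k."""
    hits = sum(source in store.search(question, k)
               for question, source in labelled)
    return hits / len(labelled)


def build_example_store() -> TinyVectorStore:
    """Hypothetical documents, for illustration only."""
    store = TinyVectorStore()
    store.add("memberships", "Membership categories, renewals and fees for volunteers.")
    store.add("training", "Training courses, assessments and certifications.")
    store.add("awards", "Rescue awards recognise outstanding rescues.")
    return store


# Hypothetical (question, source document) pairs.
LABELLED = [
    ("How do I renew my membership?", "memberships"),
    ("Which training courses are available?", "training"),
    ("Who receives rescue awards?", "awards"),
]

if __name__ == "__main__":
    print(hit_rate(build_example_store(), LABELLED, k=1))
```

A low hit rate points at retrieval (chunking, embeddings, or index settings) rather than at the language model, which helps direct fine-tuning effort to the right component.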

Results

- Over 120 test cases were designed and executed, combining manual and automated validations.

- Test execution time was reduced by more than 60% thanks to automation, allowing for quick validation of system adjustments.

- System accuracy improved by nearly 30% through iterative refinements driven by testing feedback.

- Biases in certain responses were identified and corrected, enhancing the quality of information provided to volunteers.

- Continuous validation enabled the delivery of detailed reports to developers, facilitating progressive improvements to the AI system and ensuring a more reliable service for SLSQ.